SUMMARY:

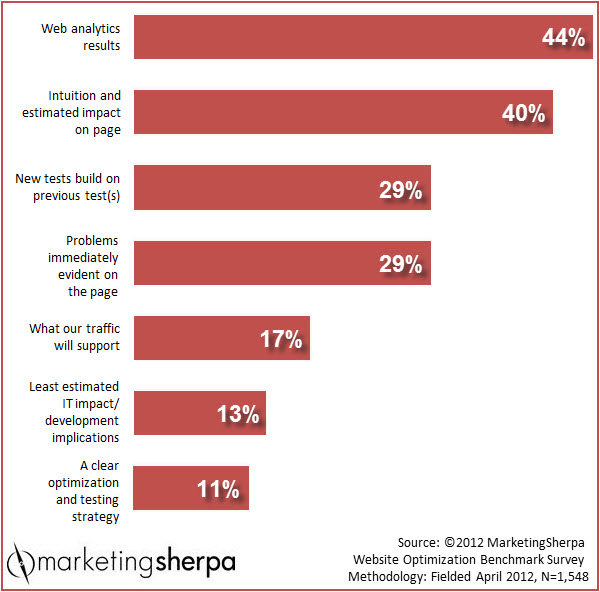

Once some marketers start engaging in A/B testing and see the results they can achieve from learning what messaging works best with their customers, they want to test everything.

Of course, you can't test everything. We're all limited by budgets, resources, time and other factors. In this week's MarketingSherpa Chart of the Week, we'll take a look at the factors that drive marketers' testing decisions.


Test your website with a real customer and actually sit next to him or her to see what [they] think and do when going through the website. This reveals very valuable information regarding the effectiveness of the site. Keep pages simple — little text, no special effects around informational elements. [For example,] we had images that will slide over to uncover a product display upon mouseover. Turned out [the] majority of visitors didn't know [about] this interactive element and left the website without ever noticing the products.
