by Paul Cheney, Senior Partnership Content Manager

Consumer Reports is the most well-known consumer advocacy organization in the U.S. Enlisting the help of the MarketingSherpa Summit audience, the team at Consumer Reports increased revenue per donation by 32%.

THE CHALLENGE

"This may actually be the largest collaborative A/B test on the planet," Austin McCraw, Senior Director of Content Production, said from the stage.

He was speaking to a crowd of around 1,200 marketers at the Bellagio in Las Vegas during MarketingSherpa Summit 2016 in February.

The test he was referring to was an email test for Consumer Reports.

Many of us are familiar with the Consumer Reports magazine and website, but some might not know that Consumer Reports is a nonprofit.

Consumer Reports formed as an independent nonprofit organization in 1936. It serves consumers through unbiased product testing and ratings, research, journalism, public education and advocacy.

- Thousands of products are tested each year in its 50 state-of-the-art labs and 327-acre automotive testing track.

- It engages with more than 1 million online activists to pass consumer protection laws in states and in Congress. It also holds corporations that do wrong by customers accountable, and encourages companies that are heading in the right direction.

- Surveys of more than 1 million subscribers capture vital feedback on purchasing decisions, experiences and shopping habits, helping it further its work to inform and protect.

Joining McCraw on the stage to give more context to the audience were Dawn Nelson, Director of Fundraising, and Bruce Duesterhoeft, Program Manager of Online Fundraising, both from Consumer Reports.

"… Because we don't accept advertising," Nelson said to the crowd, "and … we are purchasing all the products we buy — it's [a] pretty expensive business ... while we get a fair amount of revenue from the subscriptions … it's not quite enough to cover all our costs, so to bridge that gap — which is about a 10% gap — we rely on our subscribers to give us an additional, optional donation."

Those donations are heavily reliant on Consumer Reports' membership program which is, in turn, heavily reliant on email as a marketing channel.

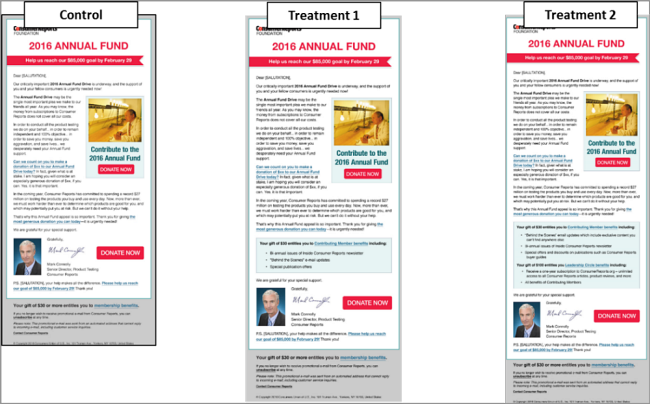

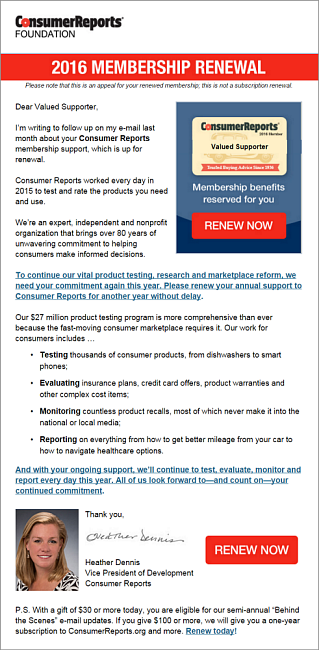

The email that would be sent for the test at Summit was the Control.

This email was to be sent to approximately 450,000 people. The average historic clickthrough rate is 0.54%.
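To put that baseline in concrete terms, here is a minimal sketch of the arithmetic. It assumes the historic rate applies uniformly to the whole send; the function name `expected_clicks` is hypothetical and used only for illustration.

```python
def expected_clicks(list_size: int, clickthrough_rate: float) -> int:
    """Estimate clicks for a send, assuming the rate applies to every recipient."""
    return round(list_size * clickthrough_rate)


# Figures from the article: about 450,000 recipients at a 0.54% clickthrough rate.
baseline_clicks = expected_clicks(450_000, 0.0054)
print(baseline_clicks)  # 2430
```

At that rate, even a small relative change in clickthrough moves only a few hundred clicks, which is part of why the test focused on both clicks and donation revenue.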

The challenge was to increase that clickthrough rate and, ultimately, to achieve more revenue from donations.

"Pick a winner," Duesterhoeft said to the audience.

THE CAMPAIGN

Before the test from the stage at the Summit, the team ran a pre-test to gather some insight on the Consumer Reports donor base.

Consumer Reports had a prior email that they tested with the same messaging as the Control for the Summit test.

"It's very similar in terms of the approach and the design of the email," McCraw said.

The Pre-Test: Is the value proposition too unfocused?


When the team analyzed it, they realized that there was a mixed focus regarding the value proposition.

In

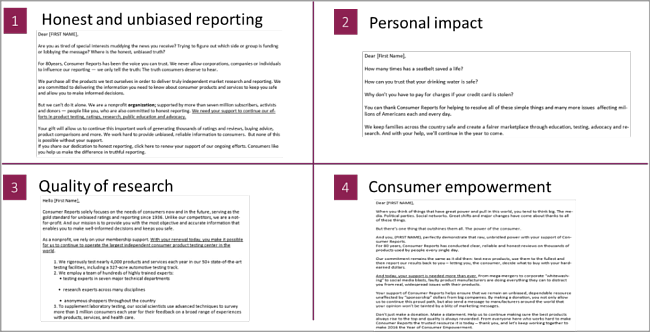

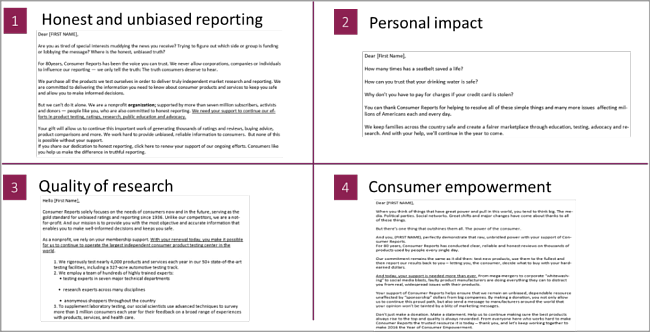

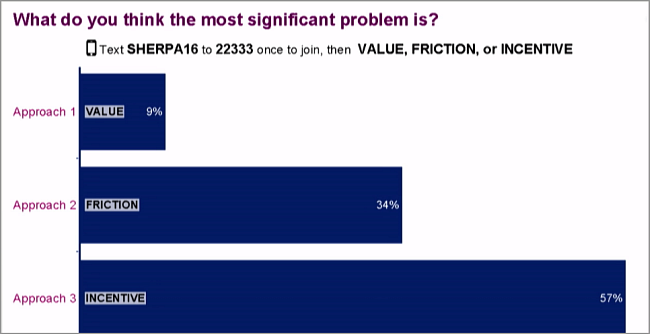

this first test, the team hypothesized that the value proposition of the Control email didn't focus enough on one aspect of the value proposition. With that in mind, the team created three potential value focuses. Each value proposition focus answered a critical question in the team's mind:

What was the best reason to give to Consumer Reports?

- Value Proposition Focus #1: Because of Consumer Reports' honest and unbiased reporting?

- Value Proposition Focus #2: Because of the personal impact Consumer Reports is having?

- Value Proposition Focus #3: Because of the unparalleled quality of Consumer Reports' research?

"We were really honing in on the value proposition," McCraw said, describing the categories for the test.

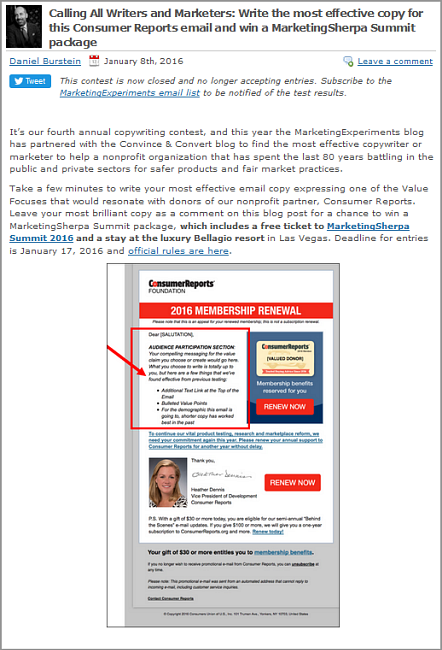

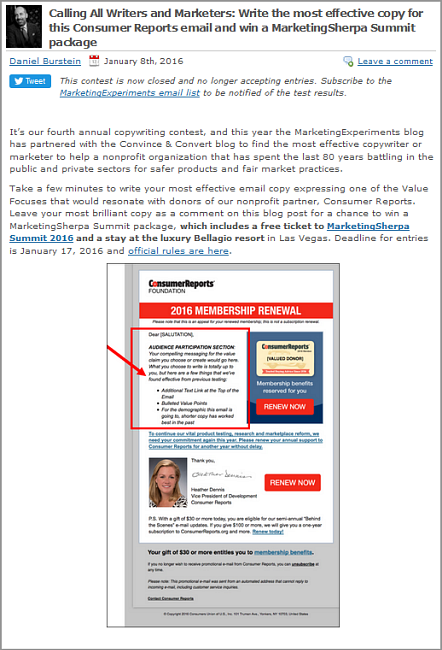

Approach: Crowdsourcing the email copy

To answer these questions, the team worked with the MarketingExperiments and Convince & Convert blog audiences.

They received over 54 submissions. Each of the submissions fell into one of the value categories determined by the team. After doing some statistical modelling, however, the team realized they could run a fourth treatment.

The final Treatment's value category was chosen by the audience as well.

With the final treatments done, the team ran the test.

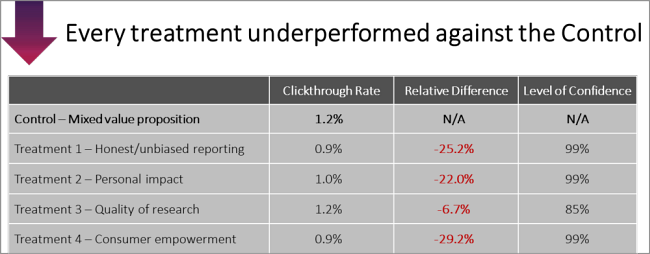

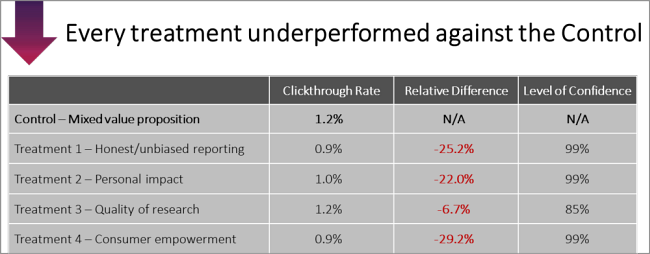

Results: The crowd suffers a setback

It turned out that the crowd didn't win with any of the treatments. "Every single one of the treatments," McCraw said, "underperformed the Control."

Hypothesis: Three potential reasons why the treatments lost

The discovery from the test was even more interesting than the results. The team hypothesized that there were a few potential reasons for the loss.

- Reason #1: Perhaps focusing on just one element of the value proposition alienates certain groups of donors.

- Reason #2: Perhaps there is some unnecessary friction in the layout/structure of the copy.

- Reason #3: Perhaps there is confusion around what the actual member benefits are and potential donors are most motivated by learning about the specific member benefits.

The Summit Test: Are membership benefits too de-emphasized?

Taking those potential reasons, the team again converted them into options for the next test's treatments.

- Emphasizing (not Isolating) Value: Treatments will feature copy that emphasizes (not isolates) a particular element of the value proposition.

- Reducing Friction: Treatments will feature copy that is simplified, shorter and easier to digest.

- Explaining Member Benefits/Incentives: Treatments will feature copy that brings greater clarity to the actual benefits of becoming a donor/member.

Approach: Crowdsourcing the email copy again

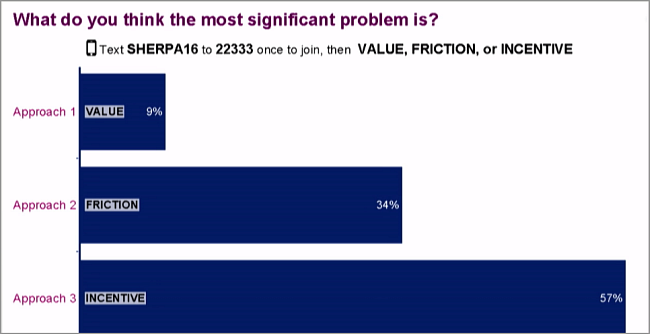

This time, with the Summit audience rather than the blog audience, the team gave the crowd another chance. The first task was for the audience to choose the test category from those above.

Put to a live survey, the audience chose the third option (explaining member benefits/incentives).

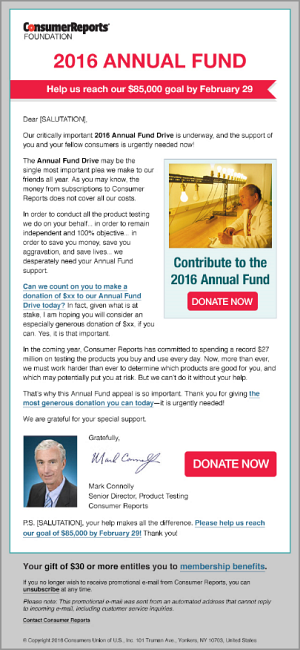


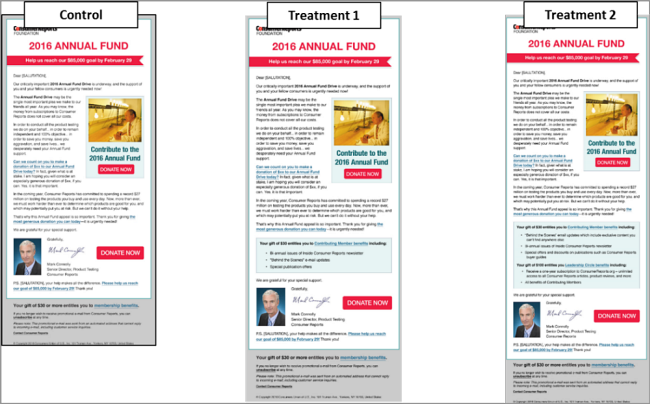

The next task was to choose the actual treatments. This time, because of the smaller changes, the team could only run two treatments against the Control.

The Summit audience was presented with

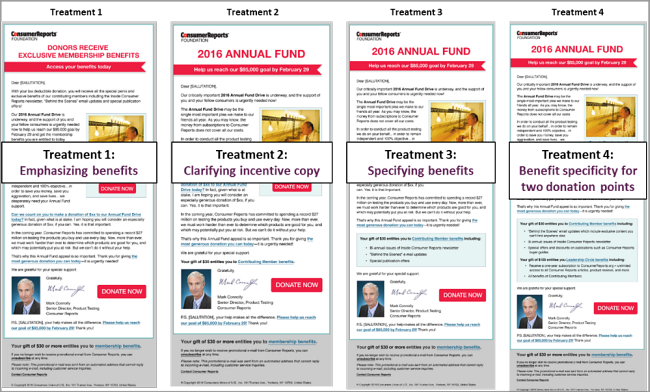

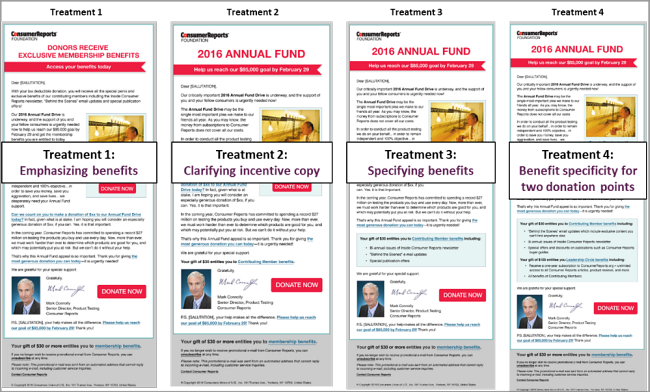

four treatments to choose from:

Click here to see the full version of this creative sample

McCraw gave four quick summaries of each of the treatments:

- Treatment 1: "All we're doing in Treatment 1 is adding a paragraph that introduces and talks about those member benefits."

- Treatment 2: "All we're going to do is move that membership benefits link up into the body of the email."

- Treatment 3: "We're going to have a call-out-box that describes the benefits that they're going to get."

- Treatment 4: "Now we're really fleshing out not just what you get but the tiers of membership and what comes along with those different tiers."

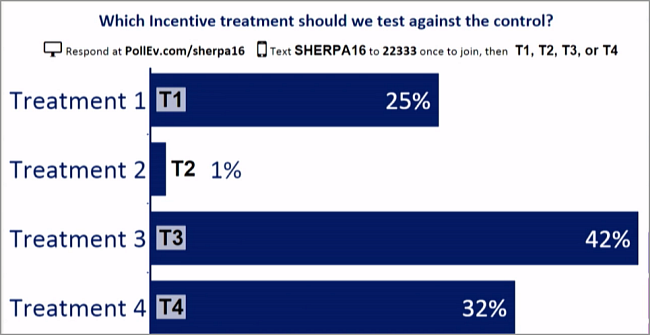

By

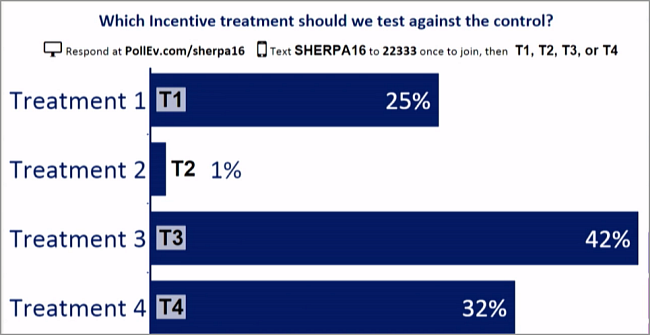

polling the audience again, the treatments were chosen:

Click here to see the full version of this creative sample

Treatments 3 and 4 won. And, with that, the test was ready to be sent to the Consumer Reports list. But in order to get the results, the Summit audience needed to wait until the next morning.

The Final Results: The crowd (ultimately) prevails

The next day, the results of the test were in.

"I have some good news and some bad news," McCraw said.

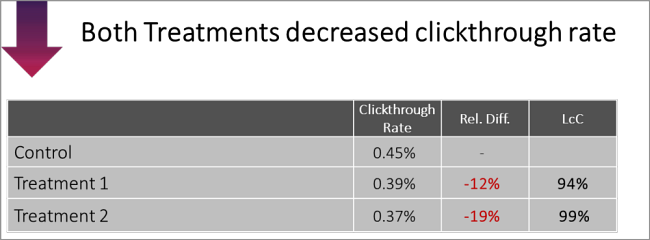

At first glance

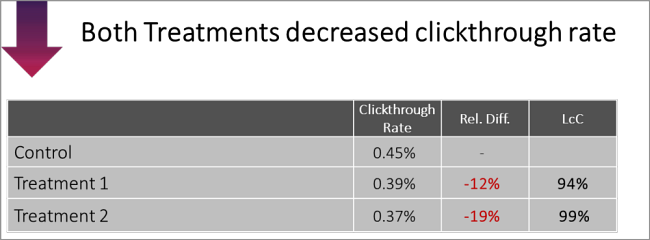

the treatments lost — again.

Click here to see the full version of this creative sample

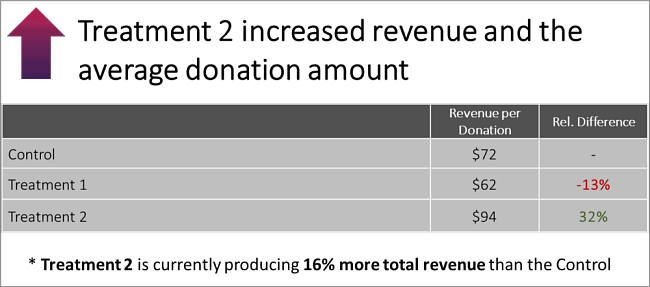

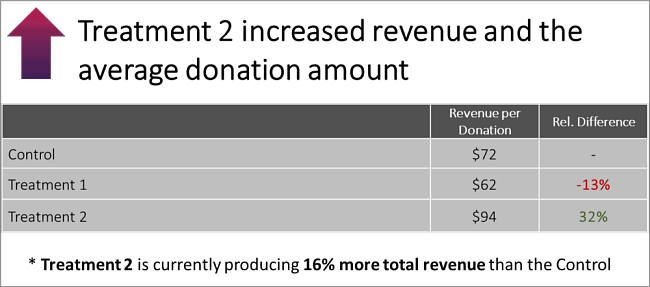

But, upon closer examination, the

treatments increased revenue per donation by 32%, with total revenue increasing by 16%.
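The two figures can be reconciled with a little arithmetic. Since total revenue equals the number of donations times revenue per donation, a 32% lift per donation alongside a 16% lift in total revenue implies fewer donations overall. The sketch below assumes both lifts are measured against the Control on comparable send sizes, which the article does not state explicitly; the function name is hypothetical.

```python
def implied_donation_count_change(rev_per_donation_lift: float,
                                  total_revenue_lift: float) -> float:
    """Relative change in donation count implied by the two lifts.

    Uses total_revenue = donation_count * revenue_per_donation, so
    (1 + total lift) = (1 + count change) * (1 + per-donation lift).
    """
    return (1 + total_revenue_lift) / (1 + rev_per_donation_lift) - 1


# Lifts reported in the article: +32% revenue per donation, +16% total revenue.
change = implied_donation_count_change(0.32, 0.16)
print(f"{change:.1%}")  # -12.1%
```

Under that assumption, the treatments drew roughly 12% fewer donations than the Control, which is consistent with them appearing to lose at first glance while still bringing in more money.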


Discovery: Benefits work. Long copy, maybe not

There are two potential reasons why this result may have happened. Both are hypotheses still to be tested.

- Both underperforming treatments increased the length and complexity of the email (friction) by including more information. Perhaps we will see a greater increase in response as we reduce the length and complexity of the email.

- Treatment 2, which was the only treatment that revealed the $100 membership level, produced the highest overall revenue per donation. Perhaps by emphasizing the higher membership level, we will motivate prospects to donate more per donation.

Nelson believed the Summit audience did a wonderful job in choosing the treatments.

"It was really fun for us," Nelson said, referring to waiting on the results, "… I was like a kid on Christmas morning."

Related Resources

Online Testing: 3 test options, 3 possible discoveries, 1 live test from Email Summit 2014 [From the MarketingExperiments blog]

Live From MarketingSherpa Summit 2016: Humana on the power of iterative testing [From the MarketingSherpa blog]

Live Experiment (Part 2): Real testing is messy [From the MarketingExperiments blog]

Email Summit 2015 Live Optimization [From MECLABS Institute]

A/B Testing: How a landing page test yielded a 6% increase in leads

Landing Page Optimization: Simple, value-infused page increases leads 8% in 24-hour test